You've run your survey, crunched your A/B test numbers, or maybe just gotten a report from your data team. The average looks good, the trend seems clear. But here's the uncomfortable question: how much can you actually trust that single number? This is where the standard error (SE) steps in. It's not just another piece of statistical jargon; it's the core metric for quantifying the uncertainty in your estimates. Ignoring it is like driving with a blindfold on: you might get lucky, but you're more likely to crash. I've seen too many smart people in business and finance make billion-dollar decisions based on a point estimate without a second thought for its standard error, and the results weren't pretty.

In This Article

- What Is Standard Error? (It's Not What You Think)

- The Crucial Difference: Standard Error vs. Standard Deviation

- How Do You Calculate Standard Error? A Practical Walkthrough

- How to Interpret Standard Error in the Real World

- Common Mistakes and How to Avoid Them

- Your Standard Error Questions, Answered

What Is Standard Error? (It's Not What You Think)

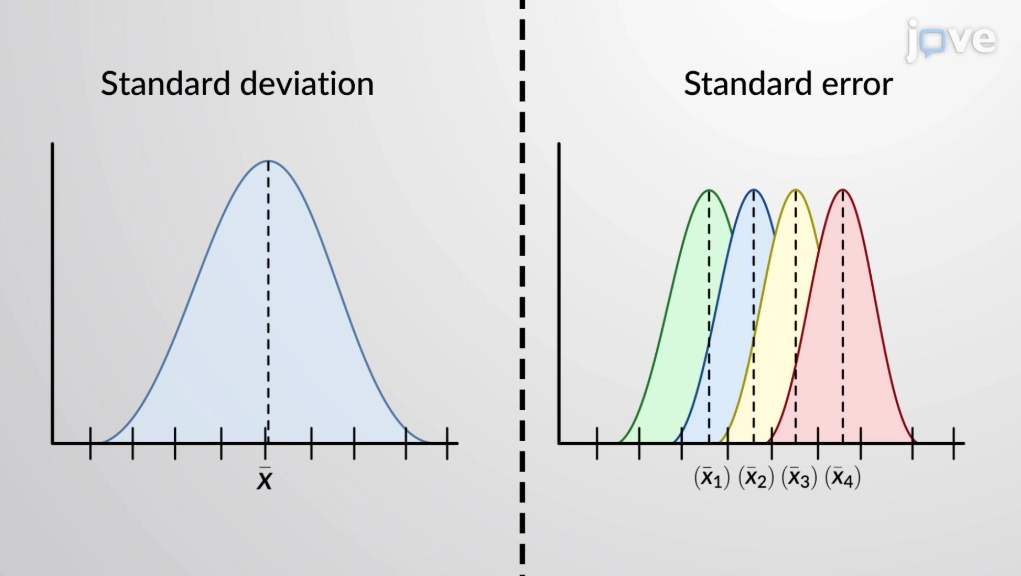

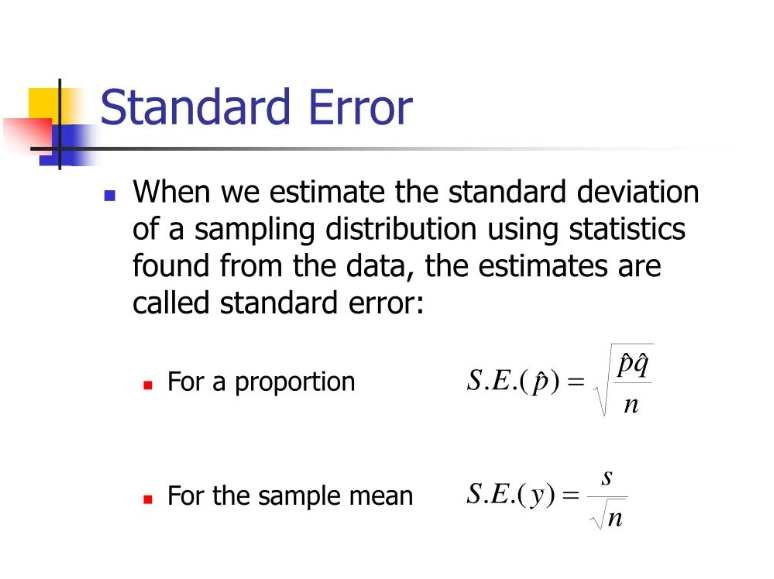

Most introductory stats courses define the standard error as "the standard deviation of the sampling distribution of a statistic." That's technically accurate, but it's a mouthful that doesn't stick. Let me put it this way: if you repeat your study a hundred times, you'll get a hundred slightly different averages. The standard error tells you how much those averages are likely to bounce around.

Think of it as a precision gauge for your sample mean. A small standard error means your sample mean is a precise estimate of the true population mean: repeat the study and you'd land close to the same number. A large standard error is a flashing red light, telling you your estimate is fuzzy and unstable. It directly fuels the confidence interval – that range you see in polls (e.g., 52% ± 3%). The "± 3%" part is the margin of error, which for a 95% interval is roughly 1.96 times the standard error.
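The "repeat your study" idea is easy to check with a small simulation. The sketch below is illustrative only: the population (mean 85, SD 22), the sample size of 50, and the 1,000 repetitions are all made-up assumptions, chosen so the result is stable from run to run. It compares the observed spread of the sample means against SD / √n.

```python
import random
import statistics

random.seed(42)  # fixed seed so the run is repeatable

# Hypothetical population (an assumption for illustration): mean ~85, SD ~22
population = [random.gauss(85, 22) for _ in range(20_000)]

n = 50             # size of each sample
n_studies = 1_000  # how many times we "repeat the study"

# Repeat the study: draw a fresh sample each time and record its mean
sample_means = [
    statistics.mean(random.sample(population, n))
    for _ in range(n_studies)
]

# Empirical standard error: how much the sample means bounce around
empirical_se = statistics.stdev(sample_means)

# Formula-based standard error: population SD divided by sqrt(n)
formula_se = statistics.pstdev(population) / n ** 0.5

print(f"spread of sample means: {empirical_se:.2f}")
print(f"SD / sqrt(n):           {formula_se:.2f}")
```

The two printed values should land close to each other, which is the whole point: the standard error is a shortcut for the spread you would see if you could actually rerun the study many times.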

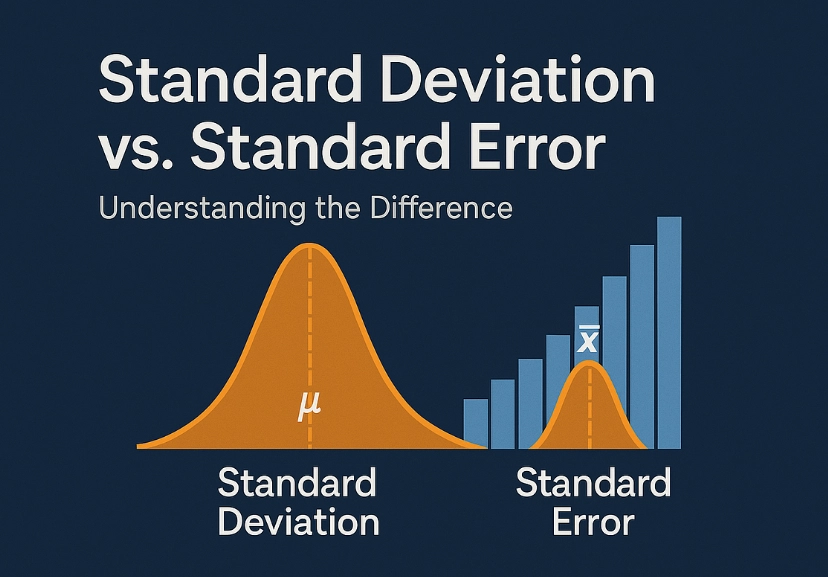

The Crucial Difference: Standard Error vs. Standard Deviation

This is the mix-up I see constantly, even in professional reports. Getting it wrong completely misrepresents what your data is saying.

| Feature | Standard Deviation (SD) | Standard Error (SE) |

|---|---|---|

| What it describes | The variability or spread of individual data points within your single sample. It answers: "How scattered is my raw data?" | The precision or uncertainty of a sample statistic (like the mean) as an estimate of the population parameter. It answers: "How much would my estimate jump around if I repeated the study?" |

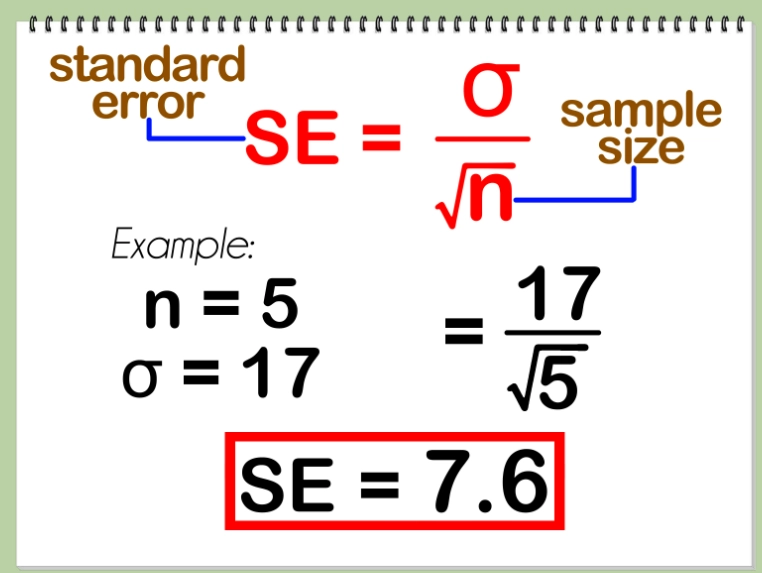

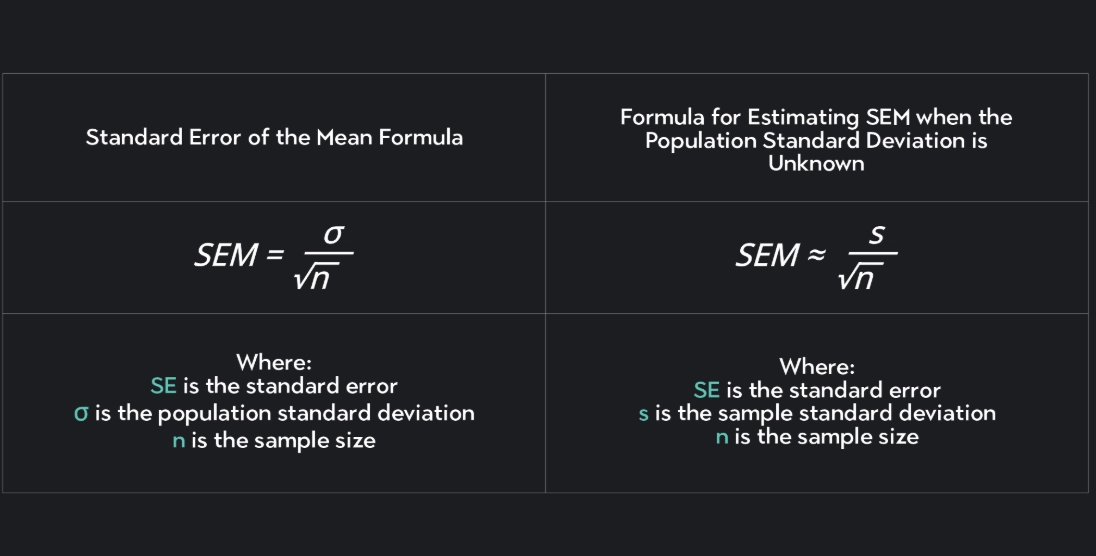

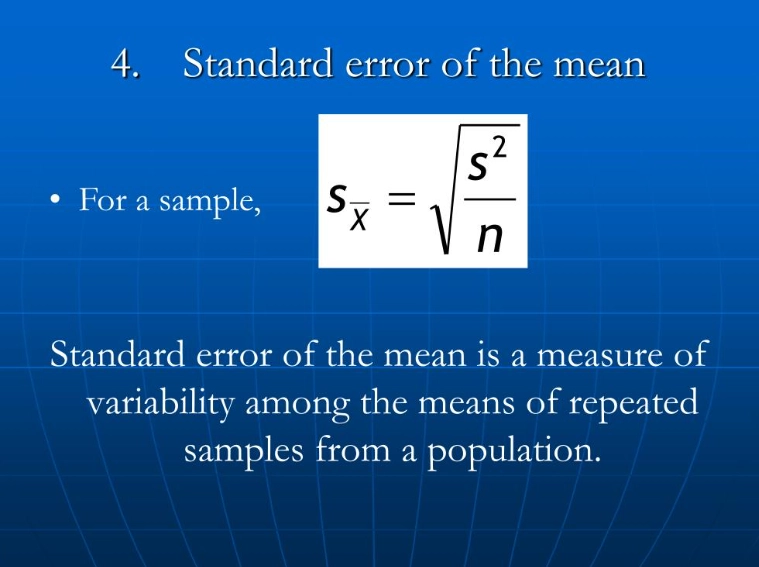

| Formula (for mean) | s = √[ Σ(xi - x̄)² / (n-1) ] | SE = s / √n |

| Impact of Sample Size | Generally stable. Adding more data gives a better estimate of SD, but doesn't systematically shrink it. | Shrinks as sample size (n) increases. More data = more precise estimate = smaller SE. |

| When to Use It | When you want to understand the diversity, risk, or natural variation in your population (e.g., variation in customer spending). | When you want to report the reliability of an estimate or compare estimates between groups (e.g., comparing average revenue from two ad campaigns). |

The relationship is in the formula: SE = SD / √n. The standard error is literally the standard deviation, dampened down by the square root of your sample size. This is why bigger samples are more trustworthy – they squeeze the uncertainty.
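To see the square-root effect in numbers, here is a minimal sketch. The helper name `standard_error` and the SD of 22 are assumptions chosen for illustration; only the formula SE = SD / √n comes from the text.

```python
import math


def standard_error(sd: float, n: int) -> float:
    """Standard error of the mean: SD divided by the square root of n."""
    return sd / math.sqrt(n)


sd = 22.0  # hypothetical sample standard deviation

# Each step quadruples the sample size
for n in (25, 100, 400, 1600):
    print(f"n = {n:>4}  SE = {standard_error(sd, n):.2f}")
# n =   25  SE = 4.40
# n =  100  SE = 2.20
# n =  400  SE = 1.10
# n = 1600  SE = 0.55
```

Note the diminishing returns: quadrupling the sample size only halves the standard error, so each extra bit of precision costs more data than the last.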

How Do You Calculate Standard Error? A Practical Walkthrough

Let's move beyond theory. Imagine you're a product manager testing a new checkout button color. You sample 50 users (n=50) with the old button and record their time-to-purchase in seconds. Your sample data has a mean (x̄) of 85 seconds and a standard deviation (s) of 22 seconds.

Plug those into the formula: SE = s / √n = 22 / √50 ≈ 3.11. Your standard error of the mean is about 3.11 seconds. This single number unlocks everything. You can now build a 95% confidence interval: 85 ± (1.96 * 3.11) ≈ 85 ± 6.1, or between 78.9 and 91.1 seconds. You're 95% confident the true average time for all users is in that range.
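The same arithmetic as a short script, using the old-button figures from this walkthrough. It uses the normal critical value of 1.96, as the text does; with n = 50, a t critical value (roughly 2.01) would give a slightly wider interval, so treat this as a sketch rather than the only correct recipe.

```python
import math

# Old-button figures from the walkthrough
n, mean, sd = 50, 85.0, 22.0

se = sd / math.sqrt(n)   # standard error of the mean
z = 1.96                 # normal critical value for a 95% interval
margin = z * se          # half-width of the confidence interval

ci_low, ci_high = mean - margin, mean + margin

print(f"SE     = {se:.2f} s")
print(f"95% CI = {ci_low:.1f} to {ci_high:.1f} s")
# SE     = 3.11 s
# 95% CI = 78.9 to 91.1 s
```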

Now you test the new button with another 50 users. Their mean time is 80 seconds with an SD of 20 seconds. Its SE is 20 / √50 ≈ 2.83 seconds. To see if the 5-second difference (85 vs. 80) is real or just noise, you'd use a two-sample t-test, which fundamentally compares the difference in means to the combined standard error of both groups. The standard errors are the core input to that test.
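As a rough illustration of how the two standard errors feed the test, the sketch below computes a Welch-style t statistic by hand. It assumes the two groups are independent; the dictionary layout and the helper name `se_mean` are choices made for this example, not a fixed recipe.

```python
import math

# Summary statistics from the walkthrough
old = {"n": 50, "mean": 85.0, "sd": 22.0}
new = {"n": 50, "mean": 80.0, "sd": 20.0}


def se_mean(group: dict) -> float:
    """Standard error of a group's mean: SD / sqrt(n)."""
    return group["sd"] / math.sqrt(group["n"])


# For independent groups, the SEs combine in quadrature
se_diff = math.sqrt(se_mean(old) ** 2 + se_mean(new) ** 2)

diff = old["mean"] - new["mean"]
t_stat = diff / se_diff  # difference measured in units of its own SE

print(f"difference = {diff:.1f} s")
print(f"SE of diff = {se_diff:.2f} s")
print(f"t          = {t_stat:.2f}")
# difference = 5.0 s
# SE of diff = 4.20 s
# t          = 1.19
```

With these numbers the statistic comes out near 1.19, well short of the rough threshold of 2 used for significance at the 5% level. The 5-second gap is about the size of one standard error of the difference, so on this data alone it could plausibly be noise.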

Standard Error for Other Statistics

The mean is just the start. Every statistic has a standard error.

- Regression Coefficients: In a marketing mix model, the SE of a coefficient (e.g., for TV ad spend) tells you if the estimated sales impact is statistically distinguishable from zero. A huge coefficient with an even bigger SE is meaningless.

- Proportions: For conversion rates or survey "yes" percentages, the SE is calculated as √[ p(1-p) / n ]. A 5% conversion rate from 100 visits has a much larger SE (and less reliability) than a 5% rate from 10,000 visits.
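The proportion formula is quick to check. This sketch plugs the 5% conversion rate into √[ p(1-p) / n ] for both traffic levels mentioned above; the function name `se_proportion` is just a label for this example.

```python
import math


def se_proportion(p: float, n: int) -> float:
    """Standard error of a sample proportion: sqrt(p * (1 - p) / n)."""
    return math.sqrt(p * (1 - p) / n)


p = 0.05  # 5% conversion rate

for n in (100, 10_000):
    se = se_proportion(p, n)
    print(f"n = {n:>6}  SE = {se * 100:.2f} percentage points")
# n =    100  SE = 2.18 percentage points
# n =  10000  SE = 0.22 percentage points
```

At 100 visits the standard error is nearly half the size of the estimate itself. At 10,000 visits it is ten times smaller, because the sample is 100 times larger and √100 = 10.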

How to Interpret Standard Error in the Real World

So you have a number. What now? Here’s how I think about it in practical scenarios.

Scenario 1: Evaluating a Business Report. A report claims a new process increased average weekly output to 550 units. Buried in the footnote: SE = 45 units. My immediate reaction is skepticism. That SE is about 8% of the mean. The 95% CI is roughly 550 ± 88 units, spanning from 462 to 638. If the old average falls anywhere inside that range, the "improvement" can't be separated from noise, which makes the estimate almost useless for decision-making. I'd ask for a larger sample.

Scenario 2: Comparing Two Investment Strategies. Strategy A has an average annual return of 8% (SE=1%). Strategy B has an average return of 9% (SE=2.5%). While B's point estimate is higher, its SE is two and a half times larger. The 95% confidence intervals overlap heavily (B's interval contains all of A's), meaning there's no statistical evidence B is truly better than A. The higher return might just be luck. Choosing B based on the point estimate alone is a classic rookie error.
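Scenario 2 can be checked numerically. The sketch below builds 95% intervals for both strategies and then looks at the difference directly. It assumes the two return series are independent, which real strategies exposed to the same market often are not, so read it as a back-of-the-envelope check only.

```python
import math

Z_95 = 1.96  # normal critical value for a 95% interval

# (mean return, standard error), both in percent, from Scenario 2
strategies = {"A": (8.0, 1.0), "B": (9.0, 2.5)}

intervals = {}
for name, (mean, se) in strategies.items():
    intervals[name] = (mean - Z_95 * se, mean + Z_95 * se)
    low, high = intervals[name]
    print(f"Strategy {name}: {low:.2f}% to {high:.2f}%")
# Strategy A: 6.04% to 9.96%
# Strategy B: 4.10% to 13.90%

# Compare the difference to its own standard error (independence assumed)
diff = strategies["B"][0] - strategies["A"][0]
se_diff = math.sqrt(strategies["A"][1] ** 2 + strategies["B"][1] ** 2)
z_score = diff / se_diff

print(f"difference = {diff:.1f} pts, SE = {se_diff:.2f}, z = {z_score:.2f}")
# difference = 1.0 pts, SE = 2.69, z = 0.37
```

Testing the difference against its own standard error is a sharper check than eyeballing interval overlap. Here both point the same way: a one-point edge with a z-score around 0.37 is nowhere near distinguishable from chance.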

Common Mistakes and How to Avoid Them

After years of reviewing analyses, here are the blunders I see on repeat.

1. Reporting the mean without the SE (or a CI). This is the cardinal sin. It presents a false sense of precision. Always pair a point estimate with a measure of its uncertainty. A mean of $100 is meaningless without knowing if the next sample might give $50 or $150.

2. Confusing a small SD with a small SE. You can have wildly variable data (large SD) but still get a precise estimate of the mean if your sample is enormous (leading to a small SE). Conversely, very consistent data (tiny SD) from a pathetically small sample can still yield a large, unreliable SE.

3. Using SE to describe data spread. Don't write "the data showed high variability (SE = 15)." That's wrong. You should use the standard deviation for that. The SE describes uncertainty in the estimate, not the raw data's scatter.

4. Thinking a smaller SE always means a "better" result. Not necessarily. A tiny SE just means your estimate is precise. It could be precisely wrong if your sampling method is biased (e.g., you only survey your most loyal customers). Precision ≠ Accuracy. SE only measures precision from sampling variation, not bias.

Your Standard Error Questions, Answered

I'm presenting quarterly sales results to management. Is it better to show the standard error or a confidence interval?
